PLOS ONE: Interrater agreement of two adverse drug reaction causality assessment methods: A randomised comparison of the Liverpool Adverse Drug Reaction Causality Assessment Tool and the World Health Organization-Uppsala Monitoring Centre system

PLOS ONE: Digital versus analogue record systems for mass casualty incidents at sea—Results from an exploratory study

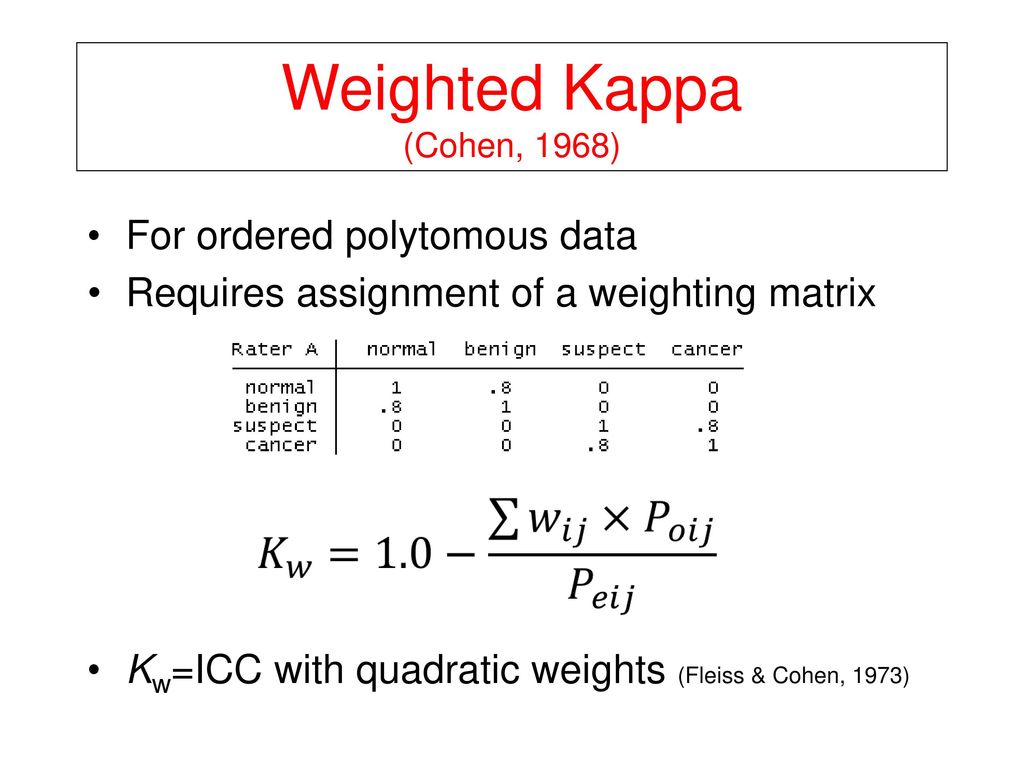

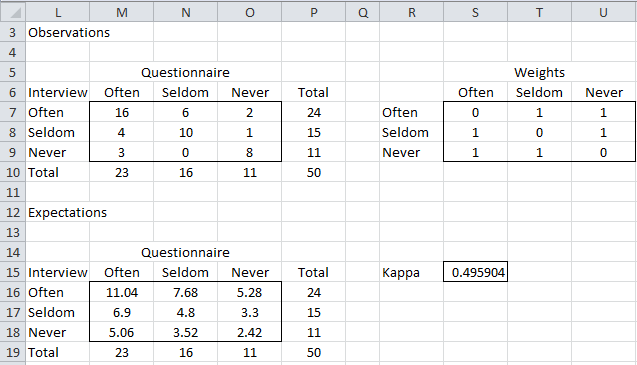

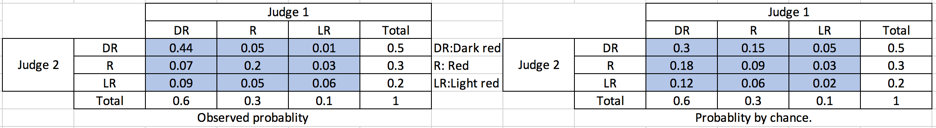

Kappa Coefficient for Dummies. How to measure the agreement between… | by Aditya Kumar | AI Graduate | Medium

PLOS ONE: Capturing the Context of Maternal Deaths from Verbal Autopsies: A Reliability Study of the Maternal Data Extraction Tool (M-DET)

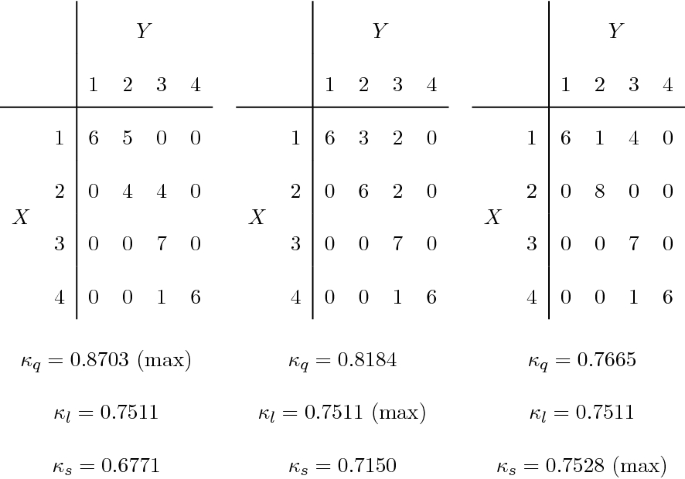

![Weighted kappa is higher than Cohen's kappa for tridiagonal agreement tables | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/b75cbafeb417eb3fdeb52d9fc4c6dbf30200c8b5/3-Table2-1.png)